QS LENS #4: The Signature Gap

The migration to post-quantum cryptography is not a single step, but a process.

With the August 2024 NIST finalisation of ML-KEM, and its rapid adoption across browsers, servers, and VPN infrastructure, many organisations can now point to real PQC deployments. The encryption layer is moving. The threat of "harvest now, decrypt later" attacks — where adversaries collect today's traffic to decrypt once a quantum computer exists — is being addressed.

But there is a second migration that has barely started, and its absence leaves organisations in a state that might be described as half-quantum-safe: protected against future decryption, but still fully vulnerable to future forgery.

That second migration is the signature migration. And unlike key encapsulation, it does not fit into a single well-defined slot. It is embedded across the entire infrastructure stack: in certificate chains, firmware images, code-signing pipelines, identity tokens, and legal documents. It touches every team. It requires years of sequenced planning. And it carries consequences that a failed KEM migration does not: a forged signature can let an attacker impersonate a server, pass off malicious firmware as legitimate, or fabricate credentials across an entire authentication surface.

This issue of QS Lens examines why the signature migration has fallen behind, where the exposure actually lives, and what a structured response looks like for CISOs who want to close the gap before the threat becomes active.

🔐 Encapsulation vs Signatures: why the Migration Timelines diverged

In August 2024, NIST published three quantum-safe standards. One, ML-KEM (FIPS 203), handles key encapsulation: establishing a shared secret between two parties over an untrusted channel. The other two, ML-DSA (FIPS 204) and SLH-DSA (FIPS 205), handle digital signatures: proving that a certificate, a software update, a firmware image, or a message genuinely comes from the party it claims to come from, and has not been altered in transit.

These were not the first post-quantum signature standards. LMS and XMSS had been standardised four years earlier in NIST SP 800-208, specifically for firmware and software signing in high-assurance environments. A fourth family, FN-DSA, is still a draft standard (FIPS 206), but it is already supported in major cryptographic libraries and is worth incorporating into forward-looking architecture decisions.

ML-KEM was adopted rapidly and is already deployed at scale across browsers, servers, and VPN infrastructure, whereas the signature algorithms have barely moved. Ten asymmetries explain why:

1️⃣ One insertion point vs many: ML-KEM fits into an existing, well-defined slot in the TLS 1.3 handshake, the key_share extension. Digital signatures have no equivalent clean slot: they are embedded everywhere (X.509 certificates, JWT tokens, firmware authentication chains, code-signing pipelines, OAuth infrastructure…). Replacing one slot is an engineering task. Replacing dozens of embedded uses is an organisational transformation: a multi-year programme involving every part of your organisation's infrastructure.

2️⃣ Hybrid mode was immediately available for KEM: engineers could deploy ML-KEM alongside X25519 in parallel. Both run, both contribute to the session key, and security holds as long as either algorithm remains unbroken. This hybrid path meant no hard cutover, no compatibility break, and no moment of risk. No equivalent finalised hybrid standard exists yet for signatures, so two signature algorithms cannot simply be run in parallel across a certificate chain in the same way.

3️⃣ Backward compatibility was achievable for KEM: a server supporting X25519MLKEM768 simply negotiates plain X25519 with clients that do not support the hybrid group, so traffic never breaks. A certificate chain signed with ML-DSA cannot be verified by a system that only knows ECDSA, and there is no graceful fallback. Every verifier must be updated before the signer can migrate.

4️⃣ Signature size growth disrupts infrastructure in ways KEM size growth does not: the X25519MLKEM768 hybrid adds roughly 1,200 bytes to the ClientHello (and about 1,100 bytes to the server's reply), painful for middleboxes, but a one-time cost per connection. An ML-DSA-65 signature is 3,309 bytes versus 64 bytes for ECDSA P-256, a roughly 52x increase that propagates through every certificate in a chain, every signed software package, every firmware image, and every authentication token. Each of those use cases has its own bandwidth, storage, and processing constraints to re-engineer.

5️⃣ KEM touches one protocol layer, signatures touch the trust model: changing the key exchange algorithm affects how a session key is established. Changing the signature algorithm affects how identity and integrity are proven, which is foundational to PKI. A PKI migration must be sequenced root-down: new root CA, then new intermediates, then new leaf certificates, in order, over years. A root CA certificate may carry a 20-year validity period. You cannot shortcut that chain.

6️⃣ Tooling and library support arrived faster for KEM: Chrome 131, Firefox 132, Go 1.24, and OpenSSL 3.5 all shipped X25519MLKEM768 as a default within months of the August 2024 NIST finalisation, and Java is following with JEP 527. ML-DSA support in TLS certificate chains, HSMs, CAs, and PKI management platforms is still maturing. The ecosystem for signature migration is meaningfully less ready.

7️⃣ The regulatory pressure landed on KEM first: regulatory pressure and operational urgency favoured KEM because of a fundamental asymmetry between the two threat types. "Harvest now, decrypt later" attacks create a present-day threat to confidentiality: data stolen today can be decrypted in the future. NIST has confirmed that authentication systems do not carry this risk: a digital signature is only vulnerable to quantum attack at the moment it is verified, not retroactively. Forging a signature therefore requires an adversary who already has a working quantum computer, a threat that does not yet exist. The regulatory deadlines are the same for both, but the threat timelines differ, which is why the urgency felt asymmetric and why KEM attracted investment first, even though signature migration is arguably harder.

8️⃣ KEM migration is reversible in a way signature migration is not: if a problem is found with ML-KEM, a system can fall back to its classical component without breaking anything. If a PKI has been migrated to ML-DSA root certificates and a flaw is found, revocation, re-issuance, and re-distribution of an entire certificate hierarchy must happen under time pressure. The stakes of getting signature migration wrong are higher, which makes organisations move more cautiously.

9️⃣ One dominant KEM versus four competing signature families: there is effectively one dominant hybrid KEM for TLS (X25519MLKEM768). For signatures, by contrast, organisations face a three-way strategic choice (ML-DSA for general use, SLH-DSA for conservative long-term use, LMS/XMSS for firmware under CNSA 2.0), plus FN-DSA on the horizon as a draft standard for applications where signature size matters most, such as IoT devices and embedded systems. That complexity slows decision-making, and organisations that cannot agree on which signature algorithm to adopt cannot begin migration.

🔟 KEM migration required one team, signature migration requires every team: deploying X25519MLKEM768 in TLS was largely the responsibility of network and security engineering teams. Signature migration touches infrastructure security (PKI), software delivery (code signing), identity (OAuth/JWT), legal (document signing), embedded systems (firmware), and procurement (HSM and device vendor readiness). Coordinating that many stakeholders across a multi-year programme is an organisational challenge that has no equivalent on the KEM side.
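To make the size argument in point 4 concrete, here is a rough sketch of the public-key and signature bytes a TLS 1.3 server sends to authenticate itself. It assumes the FIPS 204 sizes for ML-DSA-65 (1,952-byte public key, 3,309-byte signature), a raw 64-byte P-256 signature with a 65-byte uncompressed public key, and a two-certificate chain (leaf plus intermediate, root not sent). It ignores every other certificate field, SCTs and OCSP staples, so real handshakes are larger.

```python
"""Rough estimate of authentication bytes in a TLS 1.3 server flight (sketch)."""
from dataclasses import dataclass


@dataclass(frozen=True)
class SigAlg:
    name: str
    public_key_bytes: int
    signature_bytes: int


# Raw P-256 sizes (uncompressed point, r||s); DER-encoded signatures are ~70-72 bytes.
ECDSA_P256 = SigAlg("ECDSA P-256", public_key_bytes=65, signature_bytes=64)
# ML-DSA-65 sizes from FIPS 204.
ML_DSA_65 = SigAlg("ML-DSA-65", public_key_bytes=1952, signature_bytes=3309)


def auth_bytes(alg: SigAlg, chain_certs: int = 2) -> int:
    """Public-key plus signature bytes sent by the server.

    Each of the `chain_certs` certificates carries one public key and one
    issuer signature; the handshake adds one CertificateVerify signature
    made with the leaf key. All other certificate fields are ignored.
    """
    keys = chain_certs * alg.public_key_bytes
    signatures = chain_certs * alg.signature_bytes + alg.signature_bytes
    return keys + signatures


if __name__ == "__main__":
    classical = auth_bytes(ECDSA_P256)
    pqc = auth_bytes(ML_DSA_65)
    print(f"{ECDSA_P256.name}: {classical} bytes")
    print(f"{ML_DSA_65.name}: {pqc} bytes (~{pqc / classical:.0f}x)")
```

Under these assumptions the estimate is 322 bytes for ECDSA P-256 against 13,831 bytes for ML-DSA-65, roughly 43 times more per handshake, before any of the other per-use-case costs listed above.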

These ten asymmetries explain why the migration timelines diverged. But they also point to a more immediate operational problem: before any organisation can begin closing the Signature Gap, it must first understand where its signatures actually live. That inventory is rarely as straightforward as it appears.

🗂️ The hidden Inventory: six Places Signatures are hiding

The practical challenge for most organisations is not algorithm selection; it is finding every place a digital signature is used. Unlike key exchange, which lives primarily in TLS, signatures appear across the entire infrastructure stack. A Cryptographic Bill of Materials (CBOM) built only from dependency scanning will miss many of them. Here is where hidden signatures are usually found:

✅ X.509 certificate chains (TLS, S/MIME, document signing): every tier of the PKI (root → intermediate → leaf) carries a signature, and migrating it requires rebuilding trust from the root down over years, not days.

✅ Code and firmware signing (CI/CD pipelines, OS update mechanisms, embedded firmware): these are often the highest-risk targets because device lifetimes can exceed 10–15 years, and a device that can only verify classical signatures remains a liability until it is decommissioned.

✅ JWT and OAuth tokens (identity and access management infrastructure): every token validator in your environment must be updated to verify PQC signatures before the signing side can migrate, creating a sequencing challenge across potentially hundreds of service integrations.

✅ Document integrity (PDF signatures, legal archives, long-lived contracts): signed documents may need to remain verifiable for decades. A contract signed today with ECDSA may need to be re-signed before its legal validity window closes.

✅ Software supply chain (package manager signatures, container image signing, SBOM attestations): the weakest link in any supply chain is the vendor with the most classical cryptographic exposure.

✅ Hardware root of trust (secure boot chains, HSM-backed signing keys): the most operationally complex migration of all, requiring firmware updates to hardware security modules, TPMs, and secure enclaves that may not yet support PQC.
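A dependency scan only sees declared libraries, whereas signatures often appear as literals in code and configuration (JWT `alg` values, curve names, OID names). The sketch below approximates source-level detection with a pattern search. The pattern list, the file suffixes and the `scan_text`/`scan_tree` helpers are hypothetical choices for illustration: this is not a complete CBOM tool, and it will produce both false positives and misses.

```python
import re
import sys
from dataclasses import dataclass
from pathlib import Path

I = re.IGNORECASE

# Hypothetical, deliberately incomplete patterns: (family, regex, quantum_vulnerable).
SIGNATURE_PATTERNS = [
    ("RSA", re.compile(r"\b(?:RS|PS)(?:256|384|512)\b|sha\d+WithRSAEncryption|\bRSA\b", I), True),
    ("ECDSA", re.compile(r"\bES(?:256|384|512)\b|\bECDSA\b|secp(?:256|384|521)r1|prime256v1", I), True),
    ("EdDSA", re.compile(r"\bEdDSA\b|\bEd(?:25519|448)\b", I), True),
    ("ML-DSA", re.compile(r"\bML-DSA(?:-\d+)?\b|\bDilithium\d?\b", I), False),
    ("SLH-DSA", re.compile(r"\bSLH-DSA\b|\bSPHINCS", I), False),
    # Case-sensitive on purpose: "lms" is a common word in unrelated code.
    ("LMS/XMSS", re.compile(r"\bLMS\b|\bXMSS(?:MT)?\b"), False),
]

# Hypothetical set of file types worth scanning.
SOURCE_SUFFIXES = {".py", ".java", ".go", ".js", ".ts", ".c", ".h", ".rs",
                   ".yaml", ".yml", ".json", ".conf"}


@dataclass(frozen=True)
class Finding:
    path: str
    line: int
    algorithm: str
    quantum_vulnerable: bool


def scan_text(text: str, path: str = "<memory>") -> list[Finding]:
    """Report every line that mentions a known signature algorithm family."""
    findings = []
    for line_no, line in enumerate(text.splitlines(), start=1):
        for family, pattern, vulnerable in SIGNATURE_PATTERNS:
            if pattern.search(line):
                findings.append(Finding(path, line_no, family, vulnerable))
    return findings


def scan_tree(root: str) -> list[Finding]:
    """Walk a source tree and scan every file with a listed suffix."""
    findings = []
    for path in Path(root).rglob("*"):
        if path.is_file() and path.suffix in SOURCE_SUFFIXES:
            text = path.read_text(encoding="utf-8", errors="ignore")
            findings.extend(scan_text(text, str(path)))
    return findings


if __name__ == "__main__":
    root = sys.argv[1] if len(sys.argv) > 1 else "."
    for f in scan_tree(root):
        flag = "QUANTUM-VULNERABLE" if f.quantum_vulnerable else "pqc"
        print(f"{f.path}:{f.line}: {f.algorithm} [{flag}]")
```

Each finding still has to be assigned to one of the six categories above by a human reviewer; the script only tells you where to look.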

👉🏽 At QuRISK, we think that firmware and long-lived documents may need signature migration sooner than TLS certificates. The urgency is inverted from what most migration planning assumes: the least visible components often carry the highest risk.

⚠️ Why Half your PQC Migration may leave you fully exposed

Migrating key encapsulation secures one thing: the confidentiality of data in transit. It prevents an adversary from harvesting encrypted traffic today and decrypting it once a quantum computer exists. That protection is real, and it explains why KEM migration attracted urgent attention first.

But confidentiality is only half of what cryptography does. The other half is authentication and integrity: the ability to prove that a certificate, a firmware image, a software update, or a token genuinely comes from the party it claims to come from. That function depends entirely on digital signatures. And classical signatures remain fully breakable by any adversary with a sufficiently powerful quantum computer.

In a KEM-only migration, the attack surface that remains open is substantial. TLS certificates can be forged, allowing an adversary to impersonate any server in your infrastructure. Firmware update pipelines can be compromised, with malicious code delivered and accepted as legitimate. Identity tokens (JWTs, SAML assertions, client certificates) can be fabricated, granting access across your entire authentication surface. Long-lived signed documents face retroactive repudiation: an adversary can either challenge an existing signature or produce a forged document that appears equally valid.

The critical asymmetry is this: unlike encrypted data, signed artefacts cannot be harvested today and attacked later. Signature forgery requires a quantum computer to be operational. The threat is not yet active, but the preparation window closes on the day one exists. PKI migrations and firmware signing overhauls each take years. Organisations that begin now can finish in time; those that wait until the threat becomes active will not.

Completing KEM migration is genuinely important work. But it secures secrets while leaving identity unprotected. Once a quantum computer exists, quantum-safe encryption authenticated by a classical signature offers little protection against an active attacker, who can forge the server's signature, impersonate it, and sit at the other end of the encrypted session.

“The envelope is sealed, but the sender’s identity can still be forged.”

👉 The strategic implication for a CISO:

If your organisation has started ML-KEM deployment and is reporting PQC progress, that progress is real, but it only covers confidentiality. The authentication and integrity side of your cryptographic posture is almost certainly still entirely classical. That is what the Signature Gap means. And closing it is not a single decision: it requires choosing between ML-DSA, SLH-DSA, and LMS/XMSS depending on what you are signing, how long it needs to stay valid, what regulatory framework you operate under, and whether your infrastructure can reliably maintain signing state. Most organisations have not yet made that assessment.
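The assessment described above can be written down as an explicit, reviewable policy. The sketch below encodes one possible reading of the mapping used in this issue (ML-DSA for general use, SLH-DSA for conservative long-term use, LMS/XMSS for firmware under CNSA 2.0 when signing state can be maintained, FN-DSA where size matters once FIPS 206 is final). The `SigningContext` fields, the enum values and the 10-year threshold are hypothetical choices for illustration, not a recommendation for any specific environment.

```python
from dataclasses import dataclass
from enum import Enum


class Artefact(Enum):
    GENERAL_AUTH = "general authentication (TLS, tokens)"
    FIRMWARE = "firmware and software signing"
    ARCHIVE = "long-lived document or archive"


@dataclass(frozen=True)
class SigningContext:
    artefact: Artefact
    validity_years: int               # how long signatures must remain verifiable
    cnsa2_required: bool              # does the organisation operate under CNSA 2.0?
    reliable_state_management: bool   # can the signer guarantee one-time keys are never reused?
    size_constrained: bool = False    # e.g. IoT or embedded verifiers


LONG_LIVED_YEARS = 10  # hypothetical threshold; derive it from your own retention policy


def recommend(ctx: SigningContext) -> str:
    """Return an algorithm family for one use case (illustrative policy only)."""
    if ctx.artefact is Artefact.FIRMWARE:
        if ctx.reliable_state_management:
            # Stateful hash-based signatures, the CNSA 2.0 choice for firmware.
            return "LMS/XMSS"
        if ctx.cnsa2_required:
            # CNSA 2.0 still points firmware to LMS/XMSS: fix state handling first.
            return "LMS/XMSS after state management is fixed"
        # Stateless hash-based fallback when signing state cannot be guaranteed.
        return "SLH-DSA"
    if ctx.artefact is Artefact.ARCHIVE or ctx.validity_years >= LONG_LIVED_YEARS:
        # Conservative, hash-based security assumptions for long-lived trust.
        return "SLH-DSA"
    if ctx.size_constrained:
        # FN-DSA targets small signatures, but FIPS 206 is still a draft.
        return "FN-DSA once FIPS 206 is final, ML-DSA meanwhile"
    return "ML-DSA"


if __name__ == "__main__":
    use_cases = {
        "Public web TLS": SigningContext(Artefact.GENERAL_AUTH, 1, False, False),
        "Device firmware": SigningContext(Artefact.FIRMWARE, 15, True, True),
        "Signed contracts": SigningContext(Artefact.ARCHIVE, 30, False, False),
        "Sensor tokens": SigningContext(Artefact.GENERAL_AUTH, 1, False, False,
                                        size_constrained=True),
    }
    for name, ctx in use_cases.items():
        print(f"{name}: {recommend(ctx)}")
```

The value of writing the policy this way is less the code than the review it forces: every branch is an assumption that your security, legal and embedded teams can challenge before implementation starts.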

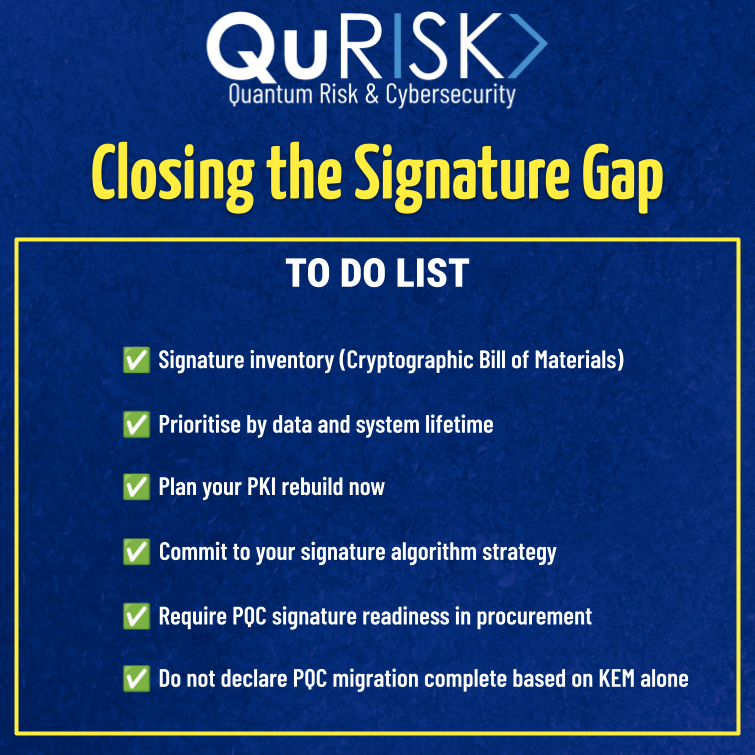

💡 Our 2026 recommended Action List for CISOs to close the Signature Gap

To move from a half-migrated to a fully quantum-safe posture, we recommend the following structured approach:

Signature inventory (Cryptographic Bill of Materials, CBOM): do not rely on dependency scanning alone. Conduct source-level detection to find hardcoded signature algorithms in copied code, modified libraries, and internal components. Map all six categories: PKI, firmware, code signing, JWT/OAuth, documents, and hardware root of trust.

Prioritise by data and system lifetime: firmware and long-lived documents need to move before TLS certificates. Classify every signed artefact by how long it must remain verifiable, then sequence migrations accordingly.

Plan your PKI rebuild now: even if leaf certificate rotation is years away, a root CA migration takes years of its own. Begin the architectural planning for your new PQC root CA today, before regulatory deadlines force a rushed timeline.

Commit to your signature algorithm strategy: list your use cases (general authentication, long-lived archives, root CA certificates, firmware and software signing…) and map each one to the most appropriate signature algorithm, keeping in mind that each algorithm has its own security assumptions, operational requirements, and infrastructure dependencies. The right choice for one environment may be the wrong choice for another. What matters is that you make an informed, deliberate decision for each use case before you begin implementation. Retrofitting an algorithm choice across PKI and firmware infrastructure is far more costly than selecting correctly at the outset.

Require PQC signature readiness in procurement: all new HSM, TPM, smart card, and embedded device procurement should specify ML-DSA and SLH-DSA support. Vendors that cannot confirm their roadmap for PQC signature support represent a supply chain risk.

Do not declare PQC migration complete based on KEM alone: update your internal metrics, board reporting, and compliance documentation to track signature migration progress separately from key encapsulation. A KEM migration completion rate of 100% with a signature migration rate of 0% is not a success: it is a half-migrated state.
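The prioritisation and reporting recommendations above can be applied directly to whatever tool holds your CBOM. A minimal sketch, assuming a hypothetical inventory record with a `kind` field ("kem" or "signature") and a required verification lifetime per artefact: it reports KEM and signature completion separately, so one score cannot mask the other, and orders the signature backlog by lifetime. The inventory entries are invented examples.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CryptoAsset:
    name: str
    kind: str              # "kem" or "signature"
    category: str          # e.g. "pki", "firmware", "jwt", "documents"
    verifiable_years: int  # how long the artefact must remain verifiable
    migrated: bool         # already on a PQC (or hybrid) algorithm?


def completion_rate(assets: list[CryptoAsset], kind: str) -> float:
    """Share of assets of one kind already migrated (0.0 if there are none)."""
    relevant = [a for a in assets if a.kind == kind]
    if not relevant:
        return 0.0
    return sum(a.migrated for a in relevant) / len(relevant)


def signature_backlog(assets: list[CryptoAsset]) -> list[CryptoAsset]:
    """Unmigrated signature uses, longest required lifetime first."""
    pending = [a for a in assets if a.kind == "signature" and not a.migrated]
    return sorted(pending, key=lambda a: a.verifiable_years, reverse=True)


# Invented example inventory.
INVENTORY = [
    CryptoAsset("TLS key exchange", "kem", "tls", 0, True),
    CryptoAsset("VPN key exchange", "kem", "vpn", 0, True),
    CryptoAsset("Web server certificates", "signature", "pki", 1, False),
    CryptoAsset("API access tokens", "signature", "jwt", 0, False),
    CryptoAsset("Device firmware images", "signature", "firmware", 15, False),
    CryptoAsset("Signed contracts archive", "signature", "documents", 30, False),
]

if __name__ == "__main__":
    print(f"KEM migration:       {completion_rate(INVENTORY, 'kem'):.0%}")
    print(f"Signature migration: {completion_rate(INVENTORY, 'signature'):.0%}")
    for asset in signature_backlog(INVENTORY):
        print(f"  next: {asset.name} ({asset.verifiable_years} years)")
```

On this toy inventory the KEM score is 100% and the signature score 0%, exactly the half-migrated state described above, and the backlog starts with the 30-year contract archive and the 15-year firmware images rather than the web certificates.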

🎁 Here is a memo you can download (right-click) to keep our main recommendations in mind.

Closing the Signature Gap, by QuRISK

⚡️QS LENS : TURNING QUANTUM & CYBERSECURITY NEWS INTO KNOWLEDGE⚡️

QS Lens is a series proposed by The Quantum-Safe Sentinel: concise, knowledge-driven articles that dive into recent quantum cybersecurity developments.

🌟 If you appreciate our work for the community, please like and share our publications, follow our page, and promote it to colleagues who may benefit from these insights.

☎️ If you have any suggestions or comments about our publications, or if you would like to discuss these important topics with one of our experts, feel free to book a meeting at www.qurisk.fr or to contact us (contact@qurisk.fr).

📡 Stay tuned for more to come. Stay healthy, and quantum-safe to you all.

🦉 This bulletin is powered by oQo, QuRISK’s Quantum Virtual Advisor: an AI-driven LLM designed to augment professionals on quantum technology–related themes, including securing adoption, risk management, and cybersecurity.

It is published by QuRISK - Quantum Risk Advisory, a French firm specialising in Quantum Risk & Cybersecurity.